
Quantization

Source:

- HC1a Images and Interpolation

- https://rvdboomgaard.github.io/ComputerVision_LectureNotes/LectureNotes/IP/Images/index.html

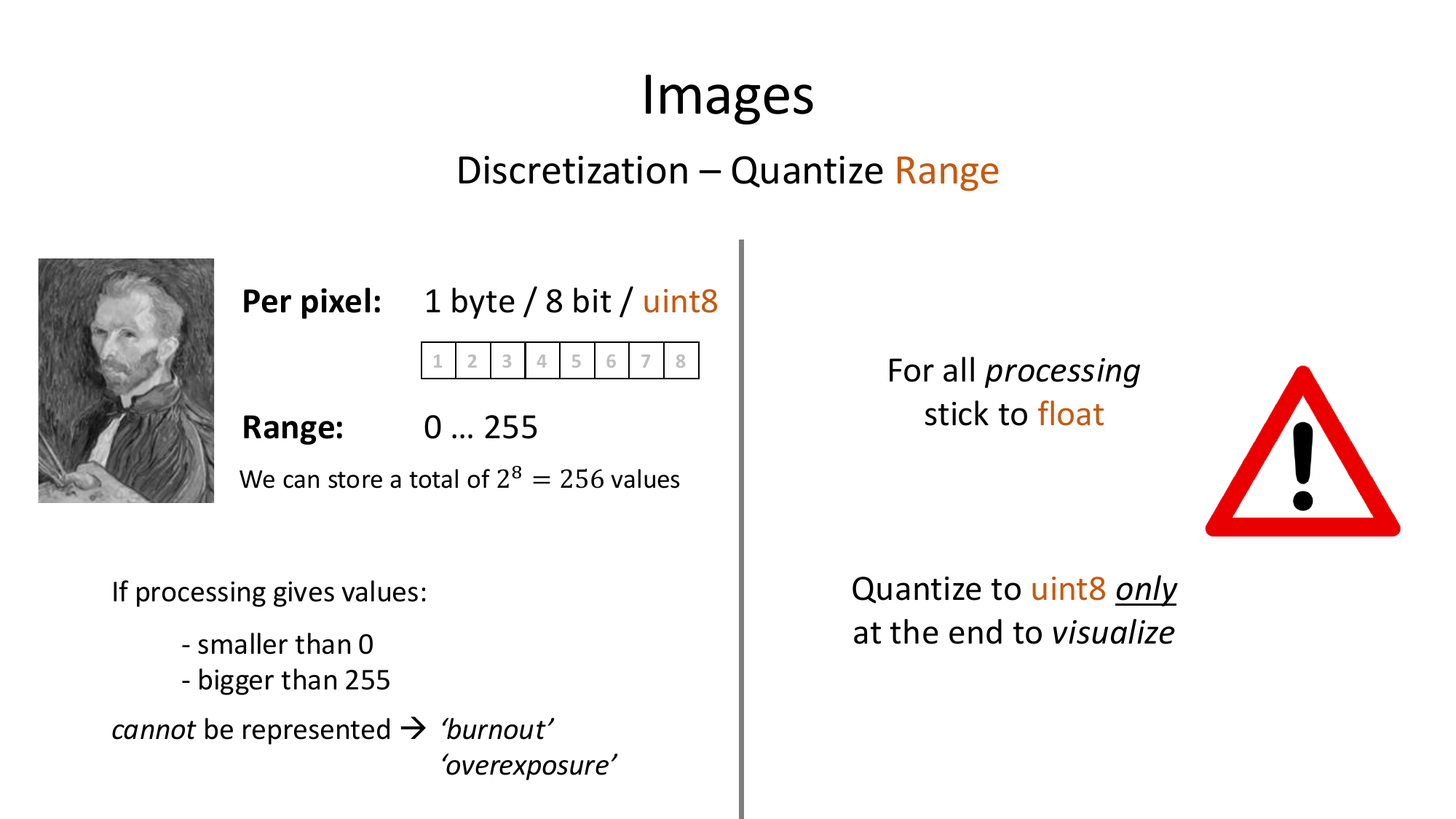

What quantization means

Quantization means:

The image intensity might be any real number in theory, but the computer stores only a finite set.

Standard grayscale case

For an 8-bit grayscale image:

$$f(x, y) \in \{0, 1, \ldots, 255\}$$

That is:

$$2^8 = 256$$

possible values.
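As a quick check of the 8-bit range, the sketch below asks NumPy for the limits of its `uint8` type. It assumes NumPy is the array library in use, as in the rest of these notes.

```python
import numpy as np

# Range of values that an unsigned 8-bit integer can hold.
info = np.iinfo(np.uint8)

# Number of distinct gray levels available in 8 bits.
num_levels = int(info.max) - int(info.min) + 1

print(info.min, info.max)  # 0 255
print(num_levels)          # 256
```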

Standard color case

For RGB, each pixel stores three values:

$$f(x, y) = (R, G, B)$$

with 8 bits per channel, so:

- 1 byte for red

- 1 byte for green

- 1 byte for blue

Total:

$$3 \times 8 = 24 \text{ bits per pixel}, \qquad 2^{24} = 16\,777\,216 \text{ possible colors}$$
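The storage cost per pixel can be checked directly on an array. This is a minimal sketch; the 480x640 image size is an arbitrary assumption chosen for illustration.

```python
import numpy as np

# Hypothetical 480x640 RGB image with 8 bits (1 byte) per channel.
height, width = 480, 640
rgb = np.zeros((height, width, 3), dtype=np.uint8)

# Three channels of one byte each.
bytes_per_pixel = rgb.shape[-1] * rgb.itemsize
num_colors = 2 ** (8 * bytes_per_pixel)

print(bytes_per_pixel)  # 3
print(rgb.nbytes)       # 921600
print(num_colors)       # 16777216
```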

The handwritten notes also mention that channel order depends on the framework.

For example, OpenCV uses BGR instead of RGB by default.
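Converting between the two channel orders only requires reversing the last axis. The sketch below uses plain NumPy on a made-up 1x2 image. In OpenCV itself, `cv2.cvtColor(img, cv2.COLOR_BGR2RGB)` performs the same swap.

```python
import numpy as np

# Hypothetical 1x2 image in BGR order: a pure-blue pixel and a pure-red pixel.
bgr = np.array([[[255, 0, 0], [0, 0, 255]]], dtype=np.uint8)

# Reversing the last axis swaps the first and third channels (BGR -> RGB).
rgb = bgr[..., ::-1]

# The blue pixel now has its 255 in the third (B) position,
# and the red pixel has its 255 in the first (R) position.
print(rgb[0, 0].tolist())
print(rgb[0, 1].tolist())
```

Displaying a BGR array with a library that expects RGB shows red and blue swapped, which is a common sign that this conversion was forgotten.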

Why the lecture says “use float while processing”

During computations, values can go outside the display range $[0, 255]$:

- smaller than $0$

- bigger than $255$

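If you keep them as `uint8`, the results can be silently wrong:

- clipping: values are saturated at 0 or 255

- overflow: values wrap around modulo 256

- loss of information: fractional parts are discarded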

So the lecture advice is:

- process in `float`

- convert to `uint8` only when visualizing
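The difference between processing in `uint8` and in `float` can be seen on a small example. The values and the brightness offset of 100 are assumptions chosen so that some results exceed 255.

```python
import numpy as np

img = np.array([10, 100, 200, 250], dtype=np.uint8)

# Adding in uint8 wraps around modulo 256 instead of exceeding 255.
brighter_uint8 = img + np.uint8(100)

# The same operation in float keeps the true values.
brighter_float = img.astype(float) + 100

# Clip to the display range and convert only at the very end.
display = np.clip(brighter_float, 0, 255).astype(np.uint8)

print(brighter_uint8.tolist())  # [110, 200, 44, 94]
print(brighter_float.tolist())  # [110.0, 200.0, 300.0, 350.0]
print(display.tolist())         # [110, 200, 255, 255]
```

In the `uint8` result the two brightest pixels become darker than the others, while the float path keeps their order and only saturates them at display time.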

Rescaling formula

If you want to map values in an array $f$ to the display range $[0, 255]$:

$$g = \frac{f - f_{\min}}{f_{\max} - f_{\min}} \cdot 255$$

This stretches the minimum to $0$ and the maximum to $255$.

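```python
import numpy as np

# Example intensities stored as float so the arithmetic below is safe.
f = np.array([20, 50, 120, 200], dtype=float)

# Stretch the minimum to 0 and the maximum to 255.
g = (f - f.min()) / (f.max() - f.min()) * 255

# astype truncates the fractional part; apply np.round first
# if nearest-integer rounding is wanted.
g_uint8 = g.astype(np.uint8)

print(g)
print(g_uint8)
```

Why order matters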

The lecture warns that `h = 255 * (f - f.min()) / (f.max() - f.min())` can be dangerous if `f` is still `uint8`.

Reason:

- `255 * (...)` may happen while values are still stored in 8 bits

- numbers above 255 can overflow

So the safe idea is:

- convert to float first

- then scale

The handwritten notes explain the subtle point here:

- the formulas are mathematically equivalent

- but `*` and `/` have the same precedence, so the expression is evaluated left to right

- if multiplication happens while values are still `uint8`, intermediate overflow can happen before normalization finishes
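The overflow can be reproduced with the same four values used earlier, this time stored as `uint8`. This is a sketch of the failure mode under NumPy's integer arithmetic, not code from the lecture. The names `h_bad` and `h_good` are chosen here for illustration.

```python
import numpy as np

f = np.array([20, 50, 120, 200], dtype=np.uint8)

# Multiply first: 255 * (f - f.min()) is computed in uint8 and wraps
# around modulo 256 before the division converts the result to float.
h_bad = 255 * (f - f.min()) / (f.max() - f.min())

# Convert to float first, then scale: no intermediate overflow.
f_float = f.astype(float)
h_good = 255 * (f_float - f_float.min()) / (f_float.max() - f_float.min())

print(h_bad.max())   # far below 255, the stretch has failed
print(h_good.max())  # 255.0
```

Writing the division before the multiplication, as in the rescaling code earlier, also avoids the problem, because the division already produces floats. Converting explicitly is safer since it does not depend on operand order.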

Easy intuition

Quantization is just rounding along the value axis: each image value is snapped to the nearest level that can be stored.

Sampling discretizes where you look. Quantization discretizes what values you can store.
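The rounding intuition can be made concrete with fewer levels than 256. The helper `quantize` below is a hypothetical function written for this note, assuming input values in $[0, 1]$ and evenly spaced levels.

```python
import numpy as np

def quantize(values, levels):
    """Round values in [0, 1] to the nearest of `levels` evenly spaced values."""
    values = np.asarray(values, dtype=float)
    # Scale to [0, levels - 1], round to an integer level, scale back.
    return np.round(values * (levels - 1)) / (levels - 1)

signal = np.array([0.0, 0.1, 0.4, 0.6, 0.9, 1.0])

# With 3 levels only 0.0, 0.5, and 1.0 can be stored.
coarse = quantize(signal, levels=3)

print(coarse.tolist())  # [0.0, 0.0, 0.5, 0.5, 1.0, 1.0]
```

The sample positions are unchanged. Only the stored values are restricted, which is the difference between quantization and sampling.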